Authors: Panagiotis Radoglou-Grammatikis (UOWM), Dimitris Asimopoulos (MINDS)

Our digital world is under constant threat: cybersecurity challenges keep evolving, and cyber-attacks are becoming more sophisticated and widespread. One of our primary defenses against these threats is the Intrusion Detection System (IDS), a vital tool for identifying and mitigating potential intrusions. Artificial intelligence (AI) technologies, primarily machine learning (ML) and deep learning (DL), have given rise to AI-based intrusion detection systems that promise better threat detection and precision. But as with any advancement, there are drawbacks. This post looks at the susceptibility of these AI-based systems to adversarial attacks, the potential consequences, and how the AI4CYBER project is working to fortify digital infrastructure.

Adversarial attacks are a rising concern for our digital defense systems. They are calculated, malicious attempts to trick or control ML/DL models by exploiting their inherent weaknesses. By making almost imperceptible changes to the input data, these attacks can lead models to produce incorrect predictions. We can categorize them by the attacker’s knowledge and objectives. White-box attacks assume access to the model itself and, for example, use its gradients to optimize perturbations that influence its predictions. Black-box attacks, conversely, involve limited knowledge of the model and create adversarial examples by observing input-output relationships. Grey-box attacks sit in between: the attacker has some understanding of the model’s architecture, but not its parameters.
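To make the white-box case concrete, the following is a minimal sketch of one well-known gradient-based technique, the Fast Gradient Sign Method (FGSM), applied to a toy logistic-regression detector. It is an illustration only and not taken from AI4CYBER or AI4SIM: the weights, bias, feature values, and the `epsilon` budget are hypothetical, and the names `predict_proba` and `fgsm` are chosen here for readability.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights, bias, x):
    """Probability that input x is malicious under a logistic-regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(weights, bias, x, y_true, epsilon):
    """Shift every feature by +/- epsilon in the direction that increases the loss.

    For logistic regression with cross-entropy loss, the gradient of the loss
    with respect to the input is (p - y_true) * weights.
    """
    p = predict_proba(weights, bias, x)
    grad = [(p - y_true) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical toy detector and a malicious sample (label 1).
WEIGHTS = [2.0, -1.0, 0.5]
BIAS = -0.5
x_malicious = [0.6, 0.2, 0.4]

# The attacker knows WEIGHTS and BIAS (white-box) and perturbs the sample.
x_adv = fgsm(WEIGHTS, BIAS, x_malicious, y_true=1, epsilon=0.3)

print("original score:   ", predict_proba(WEIGHTS, BIAS, x_malicious))
print("adversarial score:", predict_proba(WEIGHTS, BIAS, x_adv))
```

In this toy setting the original sample scores above the 0.5 decision threshold and the perturbed one falls below it, although no feature moved by more than `epsilon`. Real network-traffic features carry constraints (valid ranges, protocol semantics) that a practical attack would also need to respect.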

AI4CYBER recognizes the critical importance of understanding adversarial attacks and the risks they pose to IDS. Focused on developing AI-based IDS, AI4CYBER has put considerable resources into an attack simulation tool named AI4SIM. This tool is built to explore and understand AI-powered attacks, such as adversarial attacks. By simulating a range of attack scenarios, AI4CYBER hopes to uncover and address the vulnerabilities present in AI-based security solutions.

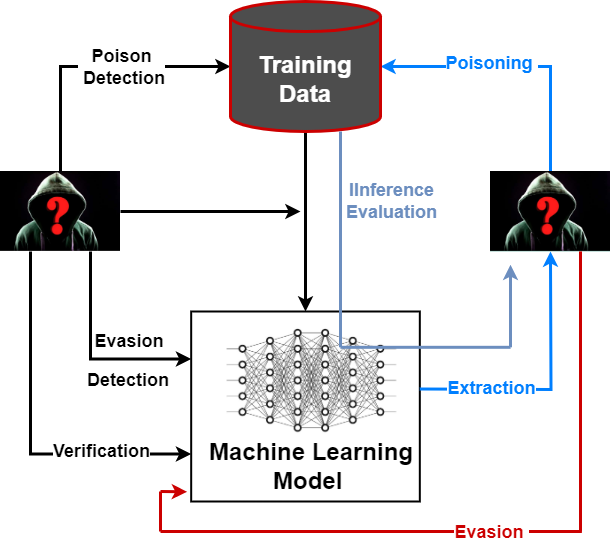

Figure 1: Evasion and Poisoning Attacks

Two particularly worrying types of adversarial attacks against IDS are evasion and poisoning attacks, as shown in Figure 1. Evasion attacks manipulate input data at detection time so that it slips past the IDS’s detection mechanisms. Understanding these attacks is crucial for creating models that can resist evasion, thus enhancing system security. Poisoning attacks, meanwhile, target the AI model during the training phase, compromising its integrity and potentially leading to misclassifications once the model is deployed.
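The effect of poisoning can be illustrated with a deliberately simple sketch. It assumes a hypothetical one-feature nearest-centroid classifier and made-up training values; it does not represent the models or data used in AI4CYBER. The attacker injects malicious-looking samples labeled as benign into the training set, which drags the benign centroid toward malicious traffic.

```python
def train_centroids(samples):
    """Compute the mean feature value per label from (value, label) pairs."""
    sums, counts = {}, {}
    for value, label in samples:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}

def classify(centroids, value):
    """Assign the label whose centroid is closest to the value."""
    return min(centroids, key=lambda label: abs(value - centroids[label]))

# Hypothetical clean training data: one numeric feature per traffic sample.
clean_data = [
    (1.0, "benign"), (2.0, "benign"), (3.0, "benign"),
    (7.0, "malicious"), (8.0, "malicious"), (9.0, "malicious"),
]

# Poisoning: malicious-looking samples injected with the wrong label.
poison = [(8.0, "benign"), (9.0, "benign"), (9.0, "benign")]

clean_model = train_centroids(clean_data)
poisoned_model = train_centroids(clean_data + poison)

suspicious_sample = 6.0
print("clean model:   ", classify(clean_model, suspicious_sample))
print("poisoned model:", classify(poisoned_model, suspicious_sample))
```

With the clean data, the sample at 6.0 lies closer to the malicious centroid. After poisoning, the benign centroid has shifted far enough that the same sample is labeled benign, even though the attacker never touched the model at detection time.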

In response to these threats, AI4CYBER is developing state-of-the-art AI-based cybersecurity solutions that can withstand adversarial manipulations. As part of this mission, the project is investigating techniques such as adversarial training and model ensembling. Adversarial training exposes the model to carefully crafted adversarial examples during training to boost its resilience. Model ensembling combines several diverse models to form a more secure and reliable IDS, since an input crafted to fool one model does not necessarily fool the others.
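The idea of combining several diverse models (commonly called ensembling) can be sketched with a simple majority vote. The three rule-based detectors below, their thresholds, and the flow fields are hypothetical stand-ins for trained models; they are not part of the AI4CYBER solutions. The sketch covers only the combination step, not adversarial training.

```python
from collections import Counter

# Three hypothetical detectors, each looking at a different traffic feature.
def rate_detector(flow):
    return "malicious" if flow["packets_per_second"] > 1000 else "benign"

def size_detector(flow):
    return "malicious" if flow["mean_packet_bytes"] < 80 else "benign"

def port_detector(flow):
    return "malicious" if flow["distinct_ports"] > 50 else "benign"

def ensemble_predict(detectors, flow):
    """Return the label chosen by the majority of detectors."""
    votes = Counter(detector(flow) for detector in detectors)
    return votes.most_common(1)[0][0]

DETECTORS = [rate_detector, size_detector, port_detector]

# A scan throttled to stay just under the rate detector's threshold.
evasive_flow = {
    "packets_per_second": 900,
    "mean_packet_bytes": 60,
    "distinct_ports": 120,
}

print("rate detector alone:", rate_detector(evasive_flow))
print("ensemble decision:  ", ensemble_predict(DETECTORS, evasive_flow))
```

Here the attacker evades the rate-based detector, but the other two still flag the flow, so the majority vote does as well. An odd number of detectors with two labels avoids ties; the benefit depends on the models being diverse enough that they do not share the same blind spots.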

One of the significant benefits of AI4SIM is its capacity to continuously monitor and update AI models. This enables timely identification of, and adaptation to, emerging threats, helping the IDS keep pace with evolving adversarial attacks.

The work undertaken by AI4CYBER responds to the vital need to strengthen our digital infrastructure against adversarial attacks. By studying the intricacies of these attacks and implementing innovative security measures, the project aims to create a more secure and robust cyber environment. Through continuous research and collaboration, AI4CYBER is striving to be at the cutting edge of cybersecurity solutions. Our collective mission is to shield our networks and systems from AI-based attacks and, more specifically, adversarial attacks.