Authors: Wissam Mallouli (MONTIMAGE), Ana Cavalli (MONTIMAGE), Eider Iturbe (TECNALIA), Erkuden Rios (TECNALIA)

AI-powered attacks include both attacks that use offensive AI to damage victim systems and some categories of Adversarial Machine Learning (AML) attacks, such as those able to learn from the system they aim to defeat. For example, IagoDroid and EEE (the evolutionary packer) [1] are two AML tools able to evade malware detection systems by crafting variants of malware source code and binary files, respectively. Known AI-fuelled attacks include phishing, malware/ransomware, supply chain attacks, and DDoS. Because of the sophistication of the techniques these attacks use, detecting them is challenging, and so is preparing the targeted systems to counter them.

The simulation of advanced and AI-powered attacks is key to understanding the security posture of a system. Defensive adversarial ML can be used for attack simulation, among other security tasks such as countermeasure design, noise detection, and evasion. Prominent AI techniques for adversarial ML include generative adversarial networks (GANs) and reinforcement learning.

Attack generation is the systematic creation and emulation of various types of attacks or malicious activities in order to evaluate the resilience, effectiveness, and vulnerabilities of a system or network. By generating attacks, security researchers and professionals can assess the system’s defences, identify potential weaknesses, and test the responsiveness of security measures. The concept plays a crucial role in proactive security testing and supports the development of robust security strategies by enabling potential threats to be detected and mitigated before real attackers can exploit them. Additionally, generating realistic attack scenarios allows organisations to enhance their incident response capabilities and develop appropriate countermeasures to protect critical assets and maintain the confidentiality, integrity, and availability of their systems.

Ambition:

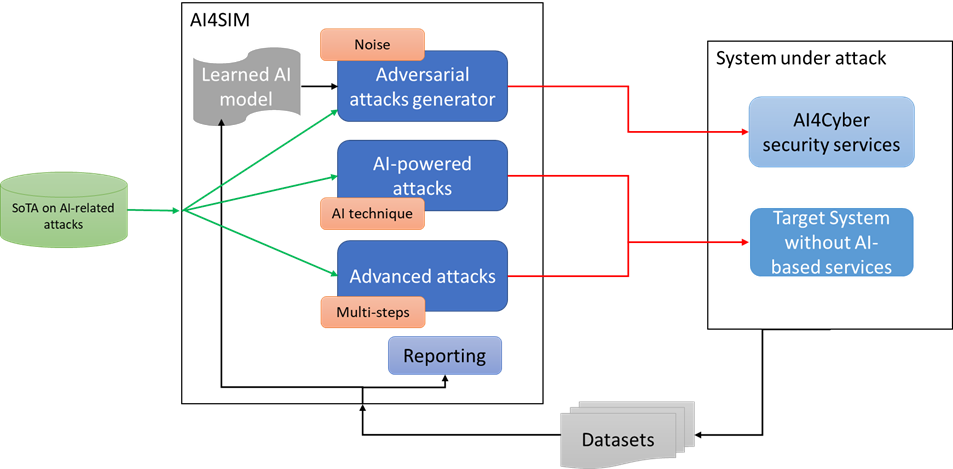

AI4CYBER will build an attack simulation solution (AI4SIM) that will generate advanced and AI-powered attacks against realistically emulated environments of the project use cases. Defensive adversarial ML techniques, including GANs and reinforcement learning, will be explored to generate the attack simulations. Moreover, relevant datasets generated in the attack simulations will be released to the cyber security community as a resource to help improve attack detection and response.

Categorisation of AI-related attacks

During the analysis of the state of the art on emerging attacks, a total of 50 attacks relevant to the project use cases have been studied. The complete state of the art will be included in deliverable D3.2 CTI and Attack simulation of AI-powered attacks – Initial version (M17 – January 2024). The analysed attacks are grouped in the AI4CYBER attack taxonomy into three main categories:

- Adversarial attacks: Adversarial attacks are deliberate and targeted efforts to exploit vulnerabilities in machine learning models, typically by introducing carefully crafted inputs or perturbations with the aim of deceiving or manipulating the model’s output. These attacks pose significant challenges to the reliability and security of machine learning systems, highlighting the need for robust defence mechanisms to detect, mitigate, and prevent them.

- AI-powered attacks: AI-powered attacks involve the utilisation of artificial intelligence techniques and algorithms to launch malicious activities and exploit vulnerabilities in various systems. These attacks leverage the capabilities of AI to automate and enhance the intelligence or sophistication of the attack strategies, posing significant risks to security, privacy, and trust in AI-driven environments.

- Advanced attacks: Advanced attacks are complex and multifaceted attacks, employing multiple layers, steps, sources, and techniques to target systems or networks. These sophisticated attacks require comprehensive defence strategies that encompass proactive threat detection, robust incident response, and continuous monitoring to effectively mitigate their impact.
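To make the first category concrete, the following is a minimal sketch of a crafted perturbation in the style of the fast gradient sign method (FGSM). It is an illustration only and is not taken from AI4SIM: the logistic-regression "detector", its weights, the feature vector, and the value of `epsilon` are all assumptions chosen for the example.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, x):
    """Probability that x is malicious under a toy logistic-regression detector."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def fgsm_perturb(weights, bias, x, true_label, epsilon):
    """Shift every feature by epsilon in the direction that increases the loss."""
    p = predict_proba(weights, bias, x)
    # For cross-entropy loss, d(loss)/d(x_i) = (p - y) * w_i.
    grad = [(p - true_label) * w for w in weights]
    signs = [(g > 0) - (g < 0) for g in grad]
    return [xi + epsilon * s for xi, s in zip(x, signs)]


# Hypothetical detector parameters and sample (assumptions for illustration).
weights = [2.0, -1.0, 1.5]
bias = -0.5
x = [1.0, 0.2, 0.8]  # a sample the detector flags as malicious

x_adv = fgsm_perturb(weights, bias, x, true_label=1, epsilon=0.6)

print("original score:   ", round(predict_proba(weights, bias, x), 3))
print("adversarial score:", round(predict_proba(weights, bias, x_adv), 3))
```

With these assumed values, the original sample scores above the 0.5 decision threshold and the perturbed one scores below it, even though no feature moved by more than `epsilon`. Real detectors and real feature spaces impose constraints (for example, a malware binary must still execute) that this toy model ignores.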

Architecture of AI4SIM

The AI4SIM service is logically divided into three main subcomponents that will be further detailed in WP3 deliverables. Each subcomponent is responsible for generating a set of attacks that target the use case systems in the project.

- Adversarial attacks generator: A tool or system designed to create and generate adversarial examples or inputs that can deceive machine learning models. It enables researchers and practitioners to assess the vulnerabilities of models and exploit them.

- AI-powered attacks generator: A tool or system that leverages artificial intelligence techniques to create and generate sophisticated attacks targeting various systems. It enables researchers and security professionals to simulate and understand the potential risks posed by AI-driven attacks and develop robust defence mechanisms.

- Advanced attacks generator: A sophisticated tool or system that generates complex and multi-layered attacks, incorporating multiple steps, sources, and techniques.

These generators can be used to perform penetration testing against advanced and AI-powered attacks, making it possible to assess the security of target systems even in the face of modern attacks.

A final subcomponent, the Reporting module, is intended to report on the status of attack generation, relying on the critical system traces generated during the attacks to build a consolidated attack/penetration testing report.

Figure 1: AI4SIM architecture
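The division of responsibilities described above can be sketched as three generators that share one interface and a reporting step that consolidates their traces. This is a hypothetical sketch, not the AI4SIM implementation: the class names, the `AttackTrace` fields, the attack names, and the target name are all assumptions made for illustration.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from typing import Protocol


@dataclass
class AttackTrace:
    generator: str   # subcomponent that produced the attack
    attack: str      # hypothetical attack name
    target: str      # hypothetical use case system
    succeeded: bool  # whether the simulated attack reached its goal


class AttackGenerator(Protocol):
    name: str

    def generate(self, target: str) -> list[AttackTrace]: ...


class StubGenerator:
    """Placeholder generator that replays fixed (attack, succeeded) outcomes."""

    def __init__(self, name: str, outcomes: list[tuple[str, bool]]):
        self.name = name
        self.outcomes = outcomes

    def generate(self, target: str) -> list[AttackTrace]:
        return [AttackTrace(self.name, attack, target, ok)
                for attack, ok in self.outcomes]


def build_report(traces: list[AttackTrace]) -> dict[str, dict[str, int]]:
    """Consolidate traces into per-generator launched/succeeded counts."""
    launched = Counter(t.generator for t in traces)
    succeeded = Counter(t.generator for t in traces if t.succeeded)
    return {name: {"launched": launched[name], "succeeded": succeeded[name]}
            for name in launched}


# One stub per subcomponent; outcomes are invented for the example.
generators: list[AttackGenerator] = [
    StubGenerator("adversarial", [("evasion-sample", True)]),
    StubGenerator("ai-powered", [("generated-phishing", False),
                                 ("adaptive-ddos", True)]),
    StubGenerator("advanced", [("multi-step-intrusion", False)]),
]

traces = [t for g in generators for t in g.generate("pilot-system")]
report = build_report(traces)
print(report)
```

Keeping the generators behind a common interface means the reporting step does not need to know which kind of attack produced a trace, which is one plausible reading of the "consolidated" report described above.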

Future Work

The implementation of AI4SIM is progressing, and several attacks are being developed using open-source and private artefacts and solutions. The delivery of the prototype tool is planned for January 2024, and it will be integrated into the project’s pilots, where its emulation efficiency will be tested.

[1] Menéndez, H. D., Bhattacharya, S., Clark, D., & Barr, E. T. (2019). The arms race: Adversarial search defeats entropy used to detect malware. Expert Systems with Applications, 118, 246-260.